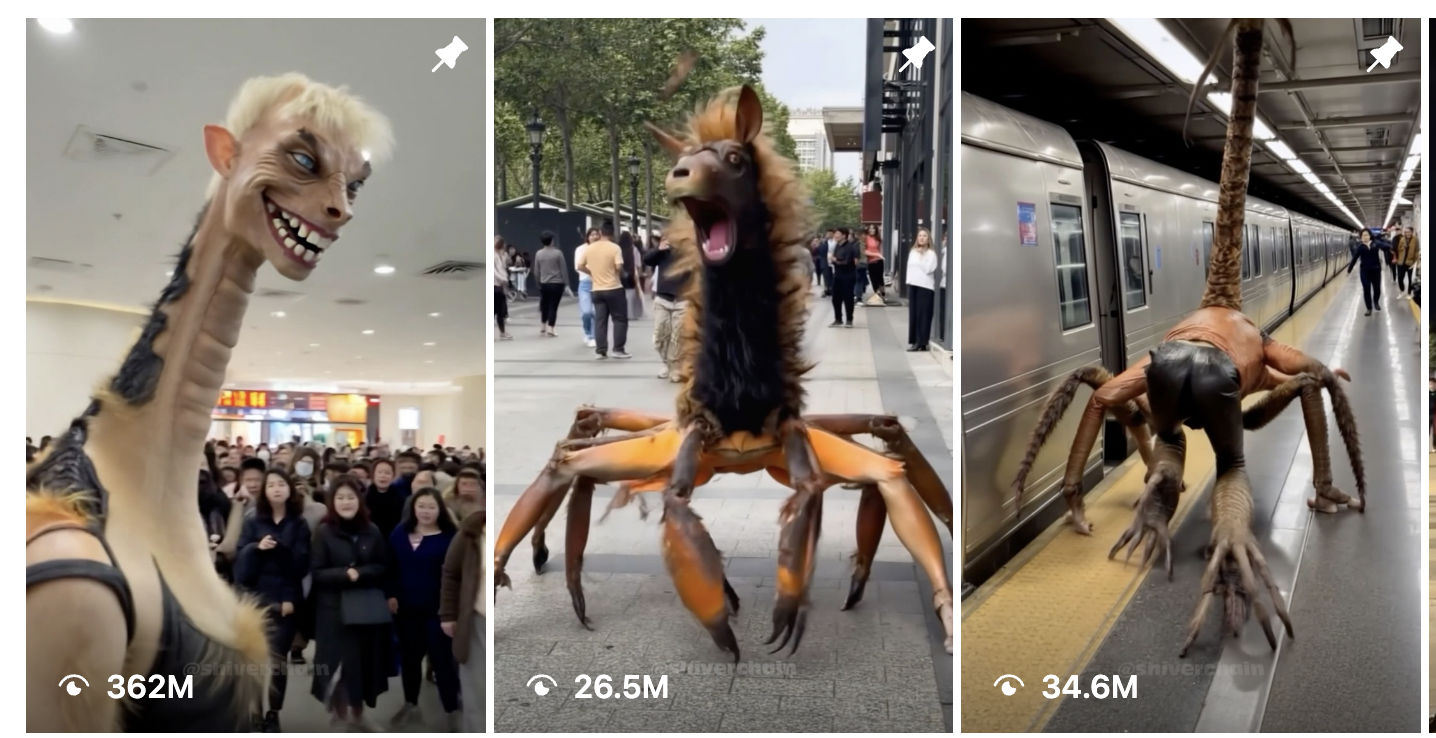

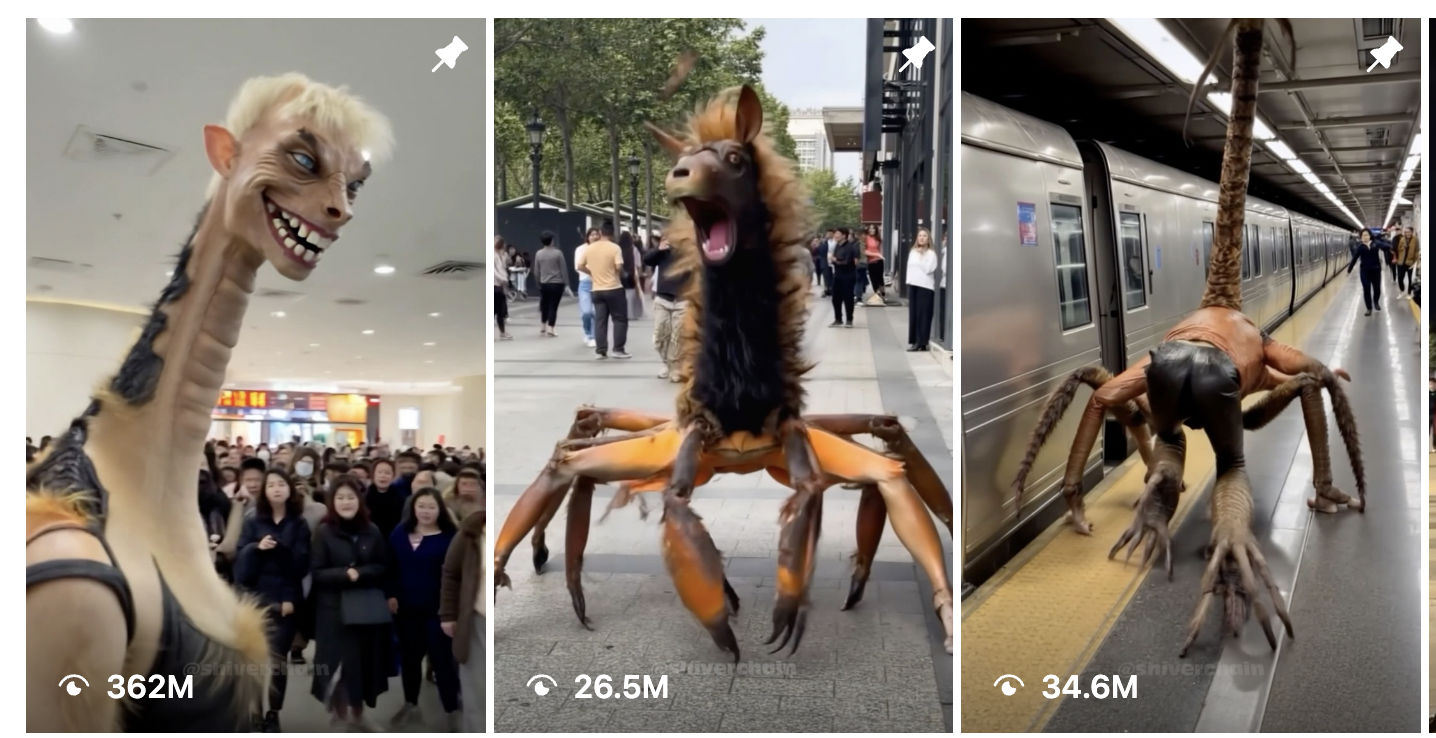

Consider, for a moment, that this AI-generated video of a bizarre creature turning into a spider, turning into a nightmare giraffe inside of a busy mall has been viewed 362 million times. That means this short reel has been viewed more times than every single article 404 Media has ever published, combined and multiplied tens of times.

This is what my Instagram Reels algorithm looks like now:

Any of these Reels could have been and probably was made in a matter of seconds or minutes. Many of the accounts that post them post multiple times per day. There are thousands of these types of accounts posting thousands of these types of Reels and images across every social media platform. Large parts of the SEO industry have pivoted entirely to AI-generated content, as has some of the internet advertising industry. They are using generative AI to brute force the internet, and it is working.

One of the first types of cyberattacks anyone learns about is the brute force attack. This is a type of hack that relies on rapid trial-and-error to guess a password. If a hacker is trying to guess a four-number PIN, they (or more likely an automated hacking tool) will guess 0000, then 0001, then 0002, and so on until the combination is guessed correctly.

As you may be able to tell from the name, brute force attacks are not very efficient, but they are effective. An attacker relentlessly hammers the target until a vulnerability is found or a password is guessed. The hacker is then free to exploit that target once the vulnerability is found.
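To make the PIN example concrete, here is a minimal sketch in Python of the guessing loop described above. It only illustrates the idea: `SECRET_PIN` and `check_pin` are hypothetical stand-ins for a real target, and the loop assumes nothing ever locks it out after a wrong guess.

```python
from itertools import product


def brute_force_pin(check, length=4):
    """Try every numeric PIN of the given length in order: 0000, 0001, 0002, ...

    `check` is any function that returns True when the guess is correct.
    Returns the matching PIN and the number of guesses it took.
    """
    for attempt, digits in enumerate(product("0123456789", repeat=length), start=1):
        guess = "".join(digits)
        if check(guess):
            return guess, attempt
    # Every combination was tried and none matched.
    return None, 10 ** length


# Hypothetical target, made up for this illustration.
SECRET_PIN = "4821"


def check_pin(guess):
    return guess == SECRET_PIN


found, attempts = brute_force_pin(check_pin)
print(f"Found {found} after {attempts} guesses")
```

Nothing about the loop is clever. It works only because guesses are cheap and the space of possible answers is small, which is the property the rest of this piece is concerned with.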

The best way to think of the slop and spam that generative AI enables is as a brute force attack on the algorithms that control the internet and which govern how a large segment of the public interprets the nature of reality. It is not just that people making AI slop are spamming the internet, it’s that the intended “audience” of AI slop is social media and search algorithms, not human beings.

What this means, and what I have already seen on my own timelines, is that human-created content is getting almost entirely drowned out by AI-generated content because of the sheer amount of it. On top of the quantity of AI slop, because AI-generated content can be easily tailored to whatever is performing on a platform at any given moment, there is a near total collapse of the information ecosystem and thus of "reality" online. I see almost nothing real on my Instagram Reels anymore, and, as I have often reported, many users seem to have completely lost the ability to tell what is real and what is fake, or simply do not care anymore.

There is a dual problem with this: It not only floods the internet with shit, crowding out human-created content that real people spend time making, but the very nature of AI slop means it evolves faster than human-created content can, so any time an algorithm is tweaked, the AI spammers can find the weakness in that algorithm and exploit it.

Human creators making traditional YouTube videos, Instagram Reels, or TikToks are often making videos that are designed to appeal to a given platform’s algorithm, but humans are not nearly as good at this as AI. In Mr. Beast’s leaked handbook for employees, he reveals an obsession with the metrics that the YouTube algorithm values: “I spent basically 5 years of my life locked in a room studying virality on YouTube,” he writes. “The three metrics you guys need to care about is Click Thru Rate (CTR), Average View Duration (AVD), and Average View Percentage (AVP).”

Mr. Beast has to care very deeply about these things and needs to have an intuitive understanding of how they work because his videos are very expensive and time-consuming to make, and a video that fails to perform is a huge waste of money and effort. Adjusting to what is working on a platform at any given moment is more art than science, and it's a slow process, because human beings have a limited ability to feed the social media content machine. It takes us hours or days to write a single article; a human running an AI can generate dozens of images, photos, or articles in a matter of seconds. A creator using AI does not necessarily have to worry about the quality of their videos, because these metrics (or any metric on any social media platform) can be brute forced. If a video fails, it does not matter, because you can make 10 more of them in a matter of seconds.

This means that people running AI-generated accounts can have hundreds or thousands of entries into the algorithmic lottery every day, and can hammer the algorithm once they find something that works. Brute force.
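The lottery framing can be put into rough numbers. The sketch below is a back-of-the-envelope illustration, not a measurement: the 1-in-1,000 hit rate is a made-up figure, and it assumes every post is an independent draw with the same odds, which real recommendation systems do not guarantee.

```python
def chance_of_at_least_one_hit(p_hit, n_posts):
    """Probability that at least one of n_posts takes off.

    Assumes each post independently has the same chance p_hit of going viral.
    """
    return 1 - (1 - p_hit) ** n_posts


# Hypothetical hit rate, chosen only for illustration.
P_HIT = 0.001

for n_posts in (1, 10, 100, 1000):
    chance = chance_of_at_least_one_hit(P_HIT, n_posts)
    print(f"{n_posts:>5} posts -> {chance:.1%} chance of at least one hit")
```

Under those assumptions, a single carefully made video is a long shot, while a thousand disposable ones are more likely than not to produce at least one hit. Volume, not quality, is what moves the odds.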

“If you can figure out how to post content at scale, that means you can figure out how to exploit weaknesses at scale,” a former Meta employee who worked on content policy told me when I asked them about the AI spamming strategy for an article in August.

Every single day, I get marketing emails from a 17-year-old YouTube hustler named Daniel Bitton. His message, uniformly, is that it makes no financial sense to spend time making quality YouTube videos, and that making a large quantity of AI-generated Shorts is far more lucrative: "While others spend 5-6 hours making ONE ‘perfect’ video...We're cranking out 8-10 shorts in under 30 minutes. How? By combining two simple ingredients: 1) Cutting-edge AI tools that do 90% of the work. 2) My simple 3-step formula that tells the AI exactly how to create viral Shorts. Total time I spend on average creating a potentially viral Short? 2-4 minutes. Max.”

Another: “YouTube doesn't care about your production value. They care about FEEDING their audience. And their audience is hungry for SHORT content … Ready to start feeding the algorithm what it's actually hungry for?”

Another: “The great thing about posting Shorts is AI. See, it practically does 90% of the work for you. All you need to do is give it a few pointers, press a few buttons, let it create videos for you, and let the algorithm do its thing.”

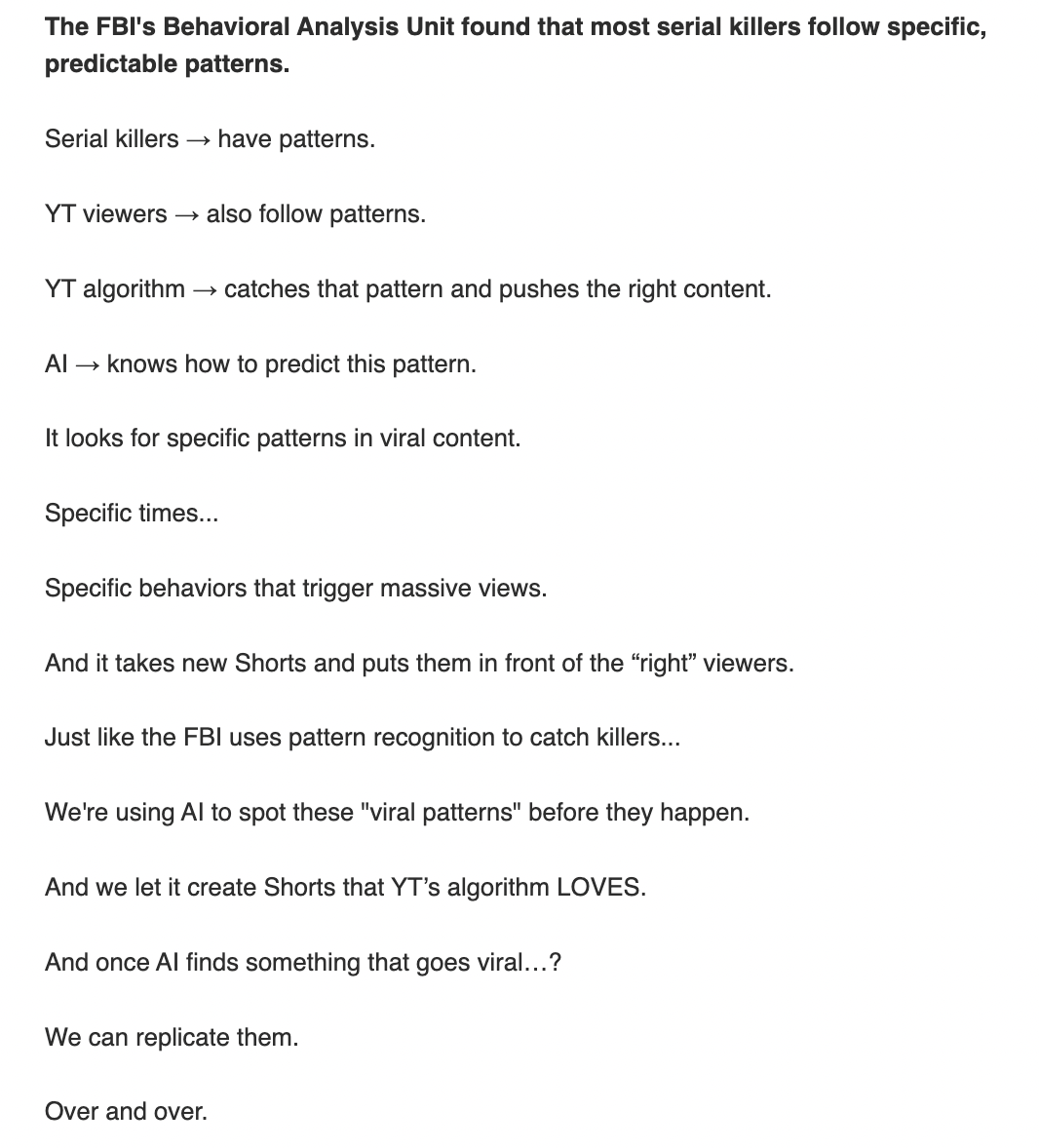

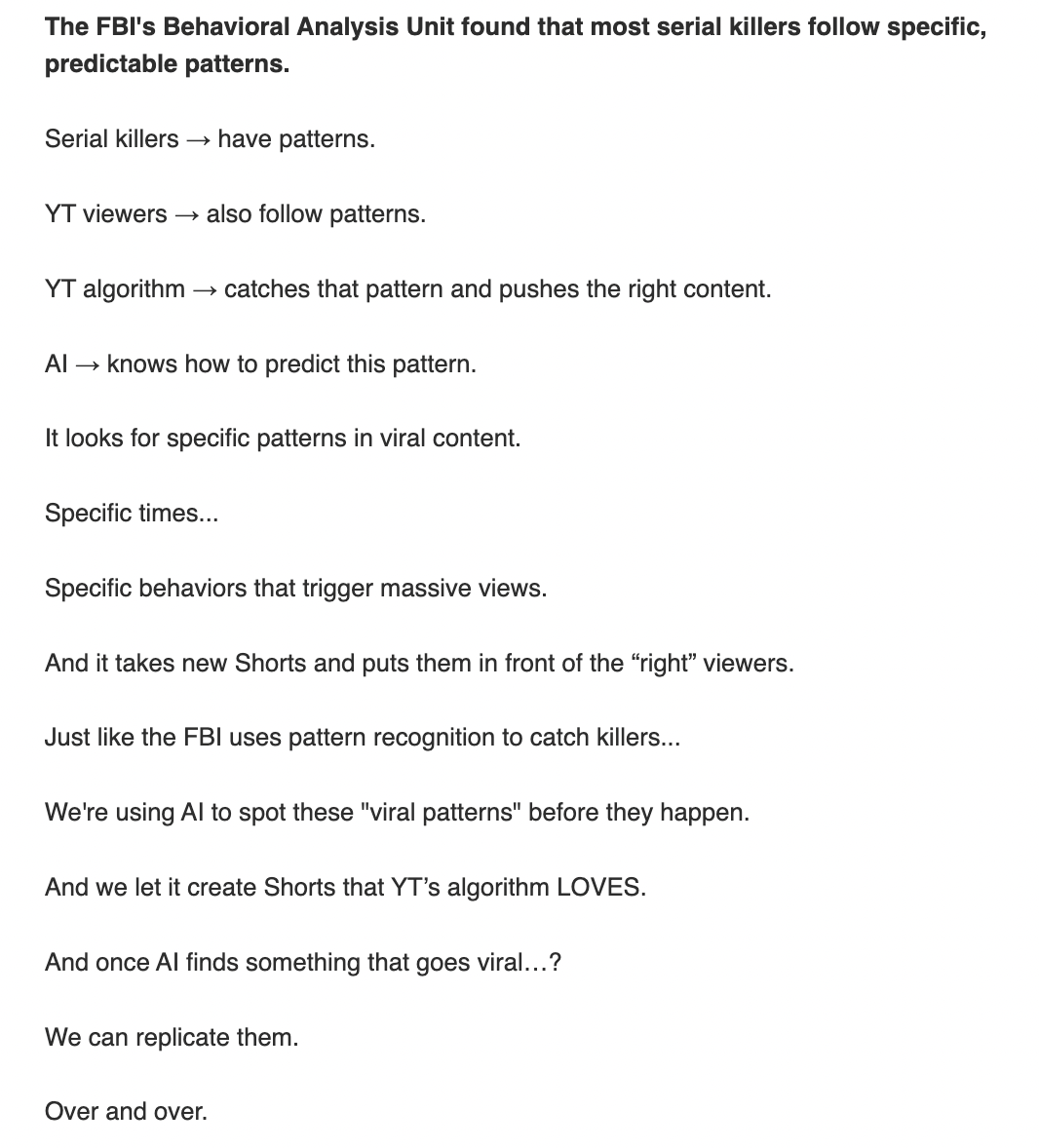

A SCREENGRAB OF ONE OF BITTON'S EMAILS

A SCREENGRAB OF ONE OF BITTON'S EMAILS

In another email, Bitton likens going viral on YouTube to the repeating patterns serial killers follow: “Serial killers → have patterns. YT viewers → also follow patterns. YT algorithm → catches that pattern and pushes the right content. AI → knows how to predict this pattern. We're using AI to spot these ‘viral patterns’ before they happen. And we let it create Shorts that YT’s algorithm LOVES. And once AI finds something that goes viral…? We can replicate them. Over and over. Like clockwork.”

0:00

/0:11

1×

This is what my Instagram Reels algorithm looks like now:

0:00

/0:36

1×

Any of these Reels could have been and probably was made in a matter of seconds or minutes. Many of the accounts that post them post multiple times per day. There are thousands of these types of accounts posting thousands of these types of Reels and images across every social media platform. Large parts of the SEO industry have pivoted entirely to AI-generated content, as has some of the internet advertising industry. They are using generative AI to brute force the internet, and it is working.

One of the first types of cyberattacks anyone learns about is the brute force attack. This is a type of hack that relies on rapid trial-and-error to guess a password. If a hacker is trying to guess a four-number PIN, they (or more likely an automated hacking tool) will guess 0000, then 0001, then 0002, and so on until the combination is guessed correctly.

As you may be able to tell from the name, brute force attacks are not very efficient, but they are effective. An attacker relentlessly hammers the target until a vulnerability is found or a password is guessed. The hacker is then free to exploit that target once the vulnerability is found.

The best way to think of the slop and spam that generative AI enables is as a brute force attack on the algorithms that control the internet and which govern how a large segment of the public interprets the nature of reality. It is not just that people making AI slop are spamming the internet, it’s that the intended “audience” of AI slop is social media and search algorithms, not human beings.

What this means, and what I have already seen on my own timelines, is that human-created content is getting almost entirely drowned out by AI-generated content because of the sheer amount of it. On top of the quantity of AI slop, because AI-generated content can be easily tailored to whatever is performing on a platform at any given moment, there is a near total collapse of the information ecosystem and thus of "reality" online. I no longer see almost anything real on my Instagram Reels anymore, and, as I have often reported, many users seem to have completely lost the ability to tell what is real and what is fake, or simply do not care anymore.

0:00

/0:47

1×

There is a dual problem with this: It not only floods the internet with shit, crowding out human-created content that real people spend time making, but the very nature of AI slop means it evolves faster than human-created content can, so any time an algorithm is tweaked, the AI spammers can find the weakness in that algorithm and exploit it.

Human creators making traditional YouTube videos, Instagram Reels, or TikToks are often making videos that are designed to appeal to a given platform’s algorithm, but humans are not nearly as good at this as AI. In Mr. Beast’s leaked handbook for employees, he reveals an obsession with the metrics that the YouTube algorithm values: “I spent basically 5 years of my life locked in a room studying virality on YouTube,” he writes. “The three metrics you guys need to care about is Click Thru Rate (CTR), Average View Duration (AVD), and Average View Percentage (AVP).”

Mr. Beast has to care very deeply about these things and needs to have an intuitive understanding of how they work because his videos are very expensive and time consuming to make, and a video that fails to perform is a huge waste of money and effort. Adjusting to what is working on a platform at any given moment is more art than science, and it's a slow process, because human beings have a limited ability to feed the social media content machine. It takes us hours or days to write a single article; a human running an AI can generate dozens of images, photos, or articles in a matter of seconds. This allows a creator using AI to not necessarily have to worry about the quality of their videos, because these metrics (or any metric on any social media platform) can be brute forced. If a video fails it does not matter, because you can make 10 more of them in a matter of seconds.

This means that people running AI-generated accounts can have hundreds or thousands of entries into the algorithmic lottery every day, and can hammer the algorithm once they find something that works. Brute force.

“If you can figure out how to post content at scale, that means you can figure out how to exploit weaknesses at scale,” a former Meta employee who worked on content policy told me when I asked them about the AI spamming strategy for an article in August.

The McDonald's Theory of YouTube Success

"Brute force" is not just what I have noticed while reporting on the spammers who flood Facebook, Instagram, TikTok, YouTube, and Google with AI-generated spam. It is the stated strategy of the people getting rich off of AI slop.Every single day, I get marketing emails from a 17-year-old YouTube hustler named Daniel Bitton. His message, uniformly, is that it makes no financial sense to spend time making quality YouTube videos, and that making a large quantity of AI-generated Shorts is far more lucrative: "While others spend 5-6 hours making ONE ‘perfect’ video...We're cranking out 8-10 shorts in under 30 minutes. How? By combining two simple ingredients: 1) Cutting-edge AI tools that do 90% of the work. 2) My simple 3-step formula that tells the AI exactly how to create viral Shorts. Total time I spend on average creating a potentially viral Short? 2-4 minutes. Max.”

Another: “YouTube doesn't care about your production value. They care about FEEDING their audience. And their audience is hungry for SHORT content … Ready to start feeding the algorithm what it's actually hungry for?”

Another: “The great thing about posting Shorts is AI. See, it practically does 90% of the work for you. All you need to do is give it a few pointers, press a few buttons, let it create videos for you, and let the algorithm do its thing.”

A SCREENGRAB OF ONE OF BITTON'S EMAILS

A SCREENGRAB OF ONE OF BITTON'S EMAILSIn another email, Bitton likens going viral on YouTube to the repeating patterns serial killers follow: “Serial killers → have patterns. YT viewers → also follow patterns. YT algorithm → catches that pattern and pushes the right content. AI → knows how to predict this pattern. We're using AI to spot these ‘viral patterns’ before they happen. And we let it create Shorts that YT’s algorithm LOVES. And once AI finds something that goes viral…? We can replicate them. Over and over. Like clockwork.”